CSAT, CES, NPS: WHICH METRICS TO USE TO MEASURE CUSTOMER SATISFACTION

Alexandra Shiryaeva

Chief Customer Officer at UseDesk

Jokes aside, it's time to get serious about metrics. You have a customer support department, and it seems to be working fine. Someone answers customers' questions and resolves their issues, but you cannot reflect this in your own reports or in the company's reports because you have no statistics or exact data. To measure or not to measure the support department's performance is not the question. You need to measure it! Otherwise, you will never know what needs to be improved and what you can be proud of. Well, you may find out at some point by accident (and, most likely, it will be an unpleasant surprise).

Many metrics describe the department's state and productivity, and it is up to the manager to select and prioritize them. The list is long: response time, resolution time, the length of the chain of people involved in solving a problem, the effectiveness of the tools the support team uses internally, and so on. The most important of them is your customer satisfaction score. If customers are satisfied, it is less critical whether your response time matches the standards commonly applied in customer service. What matters is that customers are comfortable with your response time. For example, if your operators answer within 30 minutes instead of 10, but the client receives a full, precise, friendly, and thoughtful answer and leaves positive feedback, then making response time a priority is unreasonable.

Once you have decided that a satisfied customer is your number one priority, a new task arises: now you have to decide which metric to use and how to use it. Even with the customers' satisfaction, it is not that straightforward.

Three basic metrics are always placed at the top of the lists of metrics recommended for customer service. All three aim to explore how the customer gets along with your product and support, but each has its nuances.

CSAT (Customer Satisfaction Score)

It is an essential and probably the most popular metric. You ask the customer: 'How do you ...?' The question can relate to anything, and its purpose is to get an accurate evaluation of a particular case. For example, you ask the customer, 'How do you feel about the conversation with the support operator?' and offer a choice of answers: excellent, ok, nasty.

Some experts believe that this does not reflect customer loyalty and that the questions are too specific; however, this is a perfect metric if you are only interested in support quality.
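To make the arithmetic concrete, here is a minimal sketch of how a CSAT percentage could be computed from collected answers. The function name `csat_percent` and the choice to count only "excellent" as a satisfied answer are assumptions made for illustration; your own form may treat "ok" as satisfied too.

```python
from collections import Counter

# Answer labels taken from the example question above.
# Assumption: only the top answer counts as "satisfied".
SATISFIED = {"excellent"}


def csat_percent(answers, satisfied=SATISFIED):
    """Return the share of satisfied answers as a percentage (0-100)."""
    if not answers:
        raise ValueError("no answers collected")
    counts = Counter(answers)
    happy = sum(counts[label] for label in satisfied)
    return 100.0 * happy / len(answers)


answers = ["excellent", "excellent", "ok", "nasty", "excellent"]
print(round(csat_percent(answers), 1))  # 60.0
```

Passing a different `satisfied` set (for example `{"excellent", "ok"}`) shows how much the headline number depends on that definition.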

SIDE-NOTE

CSAT metrics are built into ticketing systems. The screenshot above is an example of a Usedesk form. How it works: you set up the evaluation form in just a few clicks. Then the customer can evaluate the operator of your customer support team right after the conversation is over. Easy as one-two-three, and incompetent answers or dissatisfied customers will no longer be able to hide from you.

CES (Customer Effort Score)

The point is to find out how easy it was for the customer to resolve the issue independently and how easy it was to contact support. The developers of the metric set out primarily to evaluate customers' comfort and satisfaction in general rather than their communication experience. Ideally, there should be no communication at all (good support is support that customers do not need to use because they have all the tools to overcome difficulties on their own).

There are two versions of the CES available:

Example of CES survey

1. The question about effort: "How much effort did you personally have to put forth to handle your request?" (I would change it to "How easy was it to solve the problem?") The question is difficult to translate into other languages because 'effort' does not always translate well from English. A literal translation would read "How much effort did you make?", which does not sound polite. The answer is given on a 5-point scale, which has caused complications because it is not obvious whether 5 is the highest or the lowest grade. Thus, the second CES version was introduced.

Example of CES 2.0 survey

2. The statement "The organization made it easy for me to handle my issue" (meaning: <organization> did everything to solve the problem quickly). The customer either agrees or disagrees with the statement. The chance of confusion is minimal, and thus the second version has become more popular.

NPS (Net Promoter Score)

Example of NPS survey

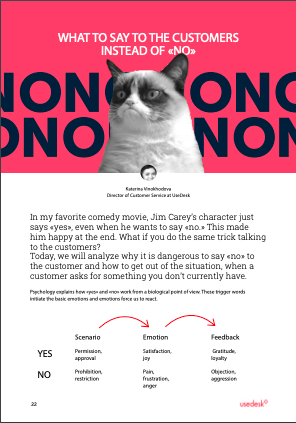

At the peak of interest in customer service, everyone was talking about NPS: use NPS and you will not need any other metrics, your wife will come back to you, and your old scars will disappear. However, there was not much talk about what NPS actually is.

The main question that NPS asks: how likely are you to recommend <brand> to friends or colleagues? Usually, the customer is given a scale from 0 to 10 to rate their degree of loyalty.

This is an ideal metric to understand the relationship between the company and the customers in general. This is the best metric to use if you are an analyst or a product specialist. This is an important metric to use if you are not afraid to review the work you've done and correct the mistakes. The metric has little to do with the customer service because the question here does not relate to your operators' competence or accessibility. Instead, it helps to investigate the level of satisfaction with the product.
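The article does not spell out how the answers turn into a single number, so here is a minimal sketch of the commonly used calculation. It assumes the usual grouping (9-10 are promoters, 7-8 are passives, 6 and below are detractors); the function name `nps` is made up for this example.

```python
def nps(scores):
    """Net Promoter Score: percent of promoters minus percent of detractors.

    Assumes the common grouping: 9-10 promoters, 7-8 passives,
    6 or below detractors. The result ranges from -100 to 100.
    """
    if not scores:
        raise ValueError("no scores collected")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


scores = [10, 9, 9, 8, 7, 6, 3, 10]
print(nps(scores))  # 25.0
```

Note that passives count toward the total but toward neither group, so a survey full of 7s and 8s yields a score of 0.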

Which one to choose?

Since there is no standard rule that you need to follow, it is best to try every metric and decide which ones are helpful.

I would say you should use two metrics: CSAT + NPS. With the first, you can take a closer look at the performance of the support department. With the second, you gain insight into customers' satisfaction with the product and learn whether they will recommend it. CSAT responses often contain product reviews, and as they are not relevant to support performance, they should be excluded from the assessment. NPS should interest not only you but also your product placement specialist. It can be very entertaining to track the relationship between these two metrics: for example, CSAT grows while NPS falls, or vice versa.

Hidden under a rock

We will discuss the ways to organize and prepare the surveys for the customers later. Now, let's cover some details that are not obvious.

When to ask?

Feedback on support performance (CSAT) should be requested quickly, while the emotions are fresh and there is still a chance to deal with a dissatisfied customer. Request feedback no later than 8 hours (that is, within one working day) after the issue has been resolved and the customer has confirmed that they have no questions left, or even better, immediately. If support is provided through a live chat, send the survey through the live chat rather than by email.
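If you automate the survey, the timing rule can be expressed as a small check. This is only a sketch: the function names, the `customer_confirmed` flag, and the channel labels are hypothetical, and the 8-hour window is simply the rule of thumb from this article.

```python
from datetime import datetime, timedelta

SURVEY_WINDOW = timedelta(hours=8)  # rule of thumb from the article


def should_send_survey(resolved_at, now, customer_confirmed):
    """True if the issue is confirmed resolved and the 8-hour window is open."""
    if not customer_confirmed:
        return False
    elapsed = now - resolved_at
    return timedelta(0) <= elapsed <= SURVEY_WINDOW


def survey_channel(support_channel):
    """Send the survey where the conversation happened, if that was live chat."""
    return "live_chat" if support_channel == "live_chat" else "email"


resolved = datetime(2024, 1, 10, 9, 0)
print(should_send_survey(resolved, resolved + timedelta(hours=2), True))  # True
print(should_send_survey(resolved, resolved + timedelta(hours=9), True))  # False
print(survey_channel("live_chat"))  # live_chat
```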

Customers evaluate the product, not the support

That is the reason we distinguish NPS from CSAT. As I mentioned before, do not hesitate to exclude any product-related responses from CSAT.

Feedback

In addition to rating the customer support experience, the customer should be able to leave feedback, and the comments section should be prominent. Review the comments periodically, react to claims and negative feedback, and pay attention to extremely positive comments.

Experiment on the question and answer forms

As soon as you notice that customers are not actively participating in the survey, do not hesitate: change the wording of the question and edit the answer choices. Often, the question gets more attention than the answers, and that is not fair. Even the effective classic form of "emoticon + description" can have several variations. For example, replace "Average" with "Ok," "Good" with "Excellent," "Bad" with "Terrible," "Excellent" with "Super," and so on. After every change, review the statistics.

What is a good score?

When you collect the first statistics at the end of the month, you may have a question: what do I do now? So, 90% of the customers are happy. Is it good? Is it enough? What about 96%? Or 89%?

It is hard to say which score to aim for, as companies and customers differ. No one can say what your ideal score is. Obviously, the closer it is to 100%, the better, but it is better to define your own score gradation. For example, today's daily score is 98-99% satisfied customers, and the customer service department gets bonuses once the score reaches 96%. If you ask me why, I will not be able to answer. The best way to set goals is to look at the companies that you respect and like. Find out how happy their customers are, and set your own satisfaction bar.
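A score gradation can be as simple as a few named bands. The sketch below uses the 96% bonus threshold from the example above; the 90% lower band and the band names are hypothetical placeholders that you would replace with your own.

```python
BONUS_THRESHOLD = 96.0    # from the example in the text
WARNING_THRESHOLD = 90.0  # hypothetical lower band, pick your own


def grade(score_percent):
    """Map a satisfaction score (0-100) to a named band."""
    if not 0 <= score_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if score_percent >= BONUS_THRESHOLD:
        return "bonus"
    if score_percent >= WARNING_THRESHOLD:
        return "acceptable"
    return "needs attention"


for score in (98.5, 96.0, 93.0, 89.0):
    print(score, grade(score))
```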

Like vs. Dislike

Do not use CSAT with only two answer options (bad-good, like-dislike). These answers are too broad, and you risk getting inflated scores because customers who feel more like "it's ok" will rarely choose "bad". Thus, you may miss the fact that some customers are having trouble.

Do you have any questions or a topic that you would like to learn more about? Please feel free to reach out at support@usedesk.com, a unique email address where emails are always very much appreciated.
